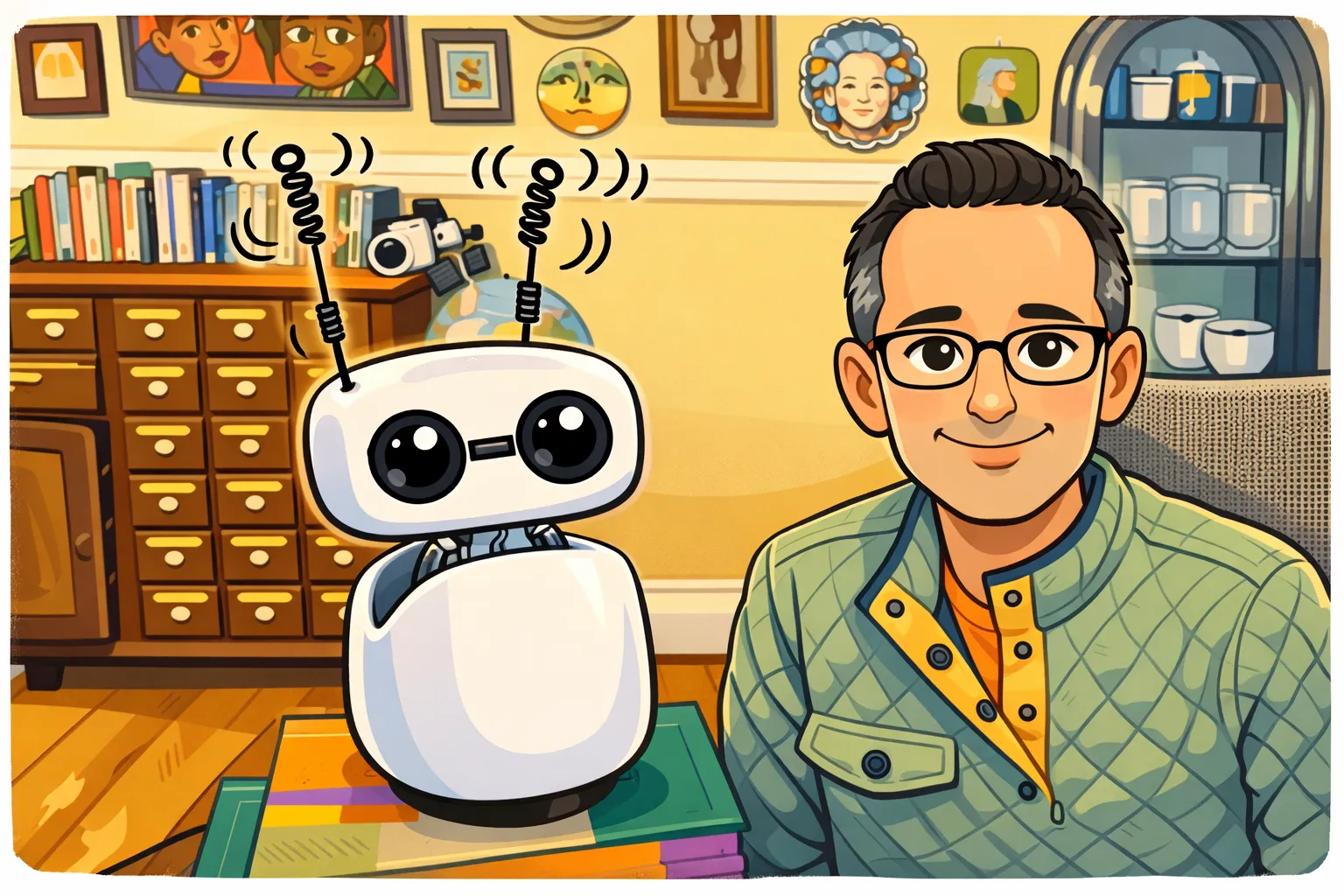

A couple of weeks ago, I created Sparky, a personal home robot designed to be useful and alive. There’s a lot to say about Sparky, but I wanted to share a few words about why he wiggles his antennas. You can see it right here, as he explains it himself.

Sparky wiggles his antennas when he is thinking. But why? The reality is that Sparky takes a while to think. In every turn of conversation, it takes time to record audio from me, convert that audio to text via speech-to-text, send the text through a custom WebSocket bridge to OpenClaw, send it onward for inference with an AI provider like Anthropic, OpenAI, or NVIDIA NIM, wait for the provider to generate a text response, receive that response, and generate Sparky’s voice as it arrives. It’s quite a process.

The slowest part by far is waiting for the AI provider to generate the response. This can take as long as 10 seconds for a very challenging question. So, on a basic level, Sparky wiggles his antennas so that the user knows he is not frozen and is actually thinking.
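For readers who want to see the idea in code, here is a minimal sketch of running a "thinking" animation while a slow request is in flight. It is not Sparky's actual code: `wiggle_until`, `fake_provider_call`, and `think` are hypothetical names, the servo loop is replaced by appending to a list, and the provider call is simulated with a short sleep.

```python
import asyncio


async def wiggle_until(done: asyncio.Event, log: list, period_s: float = 0.01) -> None:
    """Hypothetical stand-in for the antenna loop: log one wiggle per period until told to stop."""
    while not done.is_set():
        log.append("wiggle")  # a real robot would move a servo here
        await asyncio.sleep(period_s)


async def fake_provider_call(delay_s: float) -> str:
    """Simulated slow inference request (assumption: real code would call an AI provider)."""
    await asyncio.sleep(delay_s)
    return "response"


async def think(delay_s: float = 0.05) -> tuple:
    """Wiggle in the background for exactly as long as the provider call takes."""
    done = asyncio.Event()
    log = []
    wiggler = asyncio.create_task(wiggle_until(done, log))
    try:
        reply = await fake_provider_call(delay_s)
    finally:
        done.set()      # stop the animation even if the request fails
        await wiggler   # let the loop finish its current cycle cleanly
    return reply, log


reply, log = asyncio.run(think())
print(reply, len(log))
```

The point of the `try`/`finally` is that the antennas stop whether the request succeeds or raises, so the robot never looks like it is thinking forever.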

In other words, Sparky’s antennas are kind of like a progress bar. In fact, they are more like a progress bar than an activity indicator. If you look closely, you can see that the antenna wiggle increases in speed over time, and Sparky also nods his head forward over time. So, a user familiar with Sparky can clearly observe that he is getting closer to a response.
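One way such a progress-bar effect could be produced is to map elapsed thinking time onto a wiggle rate and a head angle. The sketch below is an illustration under stated assumptions, not Sparky's implementation: the linear ramp, the name `thinking_pose`, and every number except the 10-second worst case mentioned above are made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThinkingPose:
    wiggle_hz: float       # antenna wiggles per second
    head_pitch_deg: float  # forward nod angle in degrees


def thinking_pose(
    elapsed_s: float,
    expected_s: float = 10.0,     # worst-case wait taken from the post
    min_hz: float = 1.0,          # assumed starting wiggle rate
    max_hz: float = 4.0,          # assumed fastest wiggle rate
    max_pitch_deg: float = 15.0,  # assumed maximum forward nod
) -> ThinkingPose:
    """Linearly ramp wiggle speed and head nod as the wait approaches expected_s."""
    if expected_s <= 0:
        raise ValueError("expected_s must be positive")
    # Clamp progress to [0, 1] so the pose holds steady if the wait runs long.
    progress = min(max(elapsed_s / expected_s, 0.0), 1.0)
    return ThinkingPose(
        wiggle_hz=min_hz + (max_hz - min_hz) * progress,
        head_pitch_deg=max_pitch_deg * progress,
    )


print(thinking_pose(5.0))
```

Because the true response time is unknown in advance, a ramp like this is an estimate of progress rather than a measurement, which is why the sketch clamps at the maximum instead of overshooting.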

However, one cannot deny that this makes conversation with Sparky a little less fluid than if he responded immediately. So why not use a faster system, like OpenAI’s Realtime API, which is also natively audio-to-audio?

One reason is that audio-to-audio models are not as capable as the strongest text models. Another is that they do not provide the same points of integration with OpenClaw for multi-host networking, tool calling, and other orchestration features.

Put simply, there’s a decision to be made between a voice-based chat assistant that is fast but dim and one that is slow but smart. Fast and dim is fine for a toy or simple requests. But if you’re trying to create a being that can genuinely support intellectual work, be amusing and engaging, and feel like it has a real personality, then in my opinion you don’t really have a choice.

I’ll take the strongest model I can get, and rely on design elements like wiggling antennas to finesse what is still imperfect about the experience.

And here’s the thing: latency will shrink as models get faster, as inference infrastructure improves, and as streaming gets more sophisticated. But the antennas will remain, because they weren’t a workaround. They were a design choice. Sparky’s personality wasn’t bolted on to cover a flaw. It was built in from the start. The latency just gave us a reason to make it visible.